Reinforcement learning (RL) has benefited from advances in neural network architectures, and the attention mechanism is among the most influential of them. Loosely inspired by human cognitive attention, it lets an RL agent weight the parts of its input that matter most for the current decision instead of treating every input equally. This article explores how attention mechanisms enhance RL systems, covering how they work and where they are applied.

What is an Attention Mechanism?

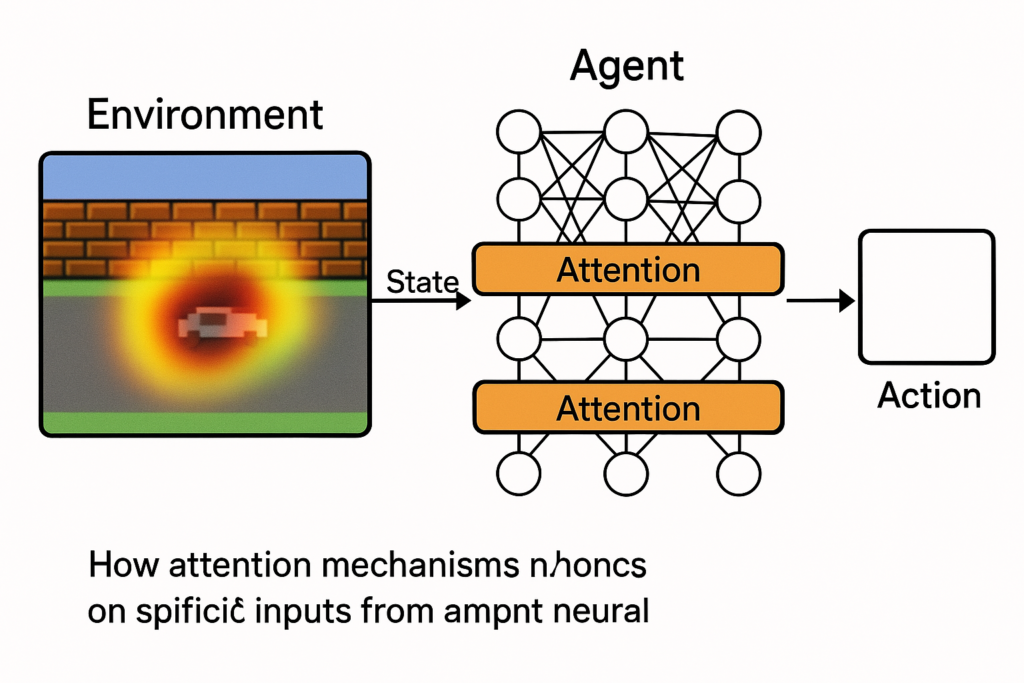

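An attention mechanism in reinforcement learning is a component that lets an agent concentrate on specific aspects of its observation while down-weighting the rest. In the common soft-attention form, it assigns each input element a non-negative weight, with the weights summing to one, and passes the weighted combination on to the rest of the network. This selective focus can make learning more efficient and effective, especially in complex environments where the relevance of each piece of information varies from one step to the next.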
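
To make the idea concrete, here is a minimal sketch of soft attention for a single decision step, written in plain Python. It assumes the scaled dot-product form of attention; the entity features and the `query` vector are hypothetical values chosen for illustration, and in a real agent the query would be produced by learned parameters.

```python
import math

def softmax(scores):
    """Turn raw scores into positive weights that sum to 1."""
    peak = max(scores)  # subtract the max for numerical stability
    exps = [math.exp(s - peak) for s in scores]
    total = sum(exps)
    return [e / total for e in exps]

def attend(query, keys, values):
    """Scaled dot-product attention for a single query vector."""
    scale = math.sqrt(len(query))
    # Relevance score of each key with respect to the query.
    scores = [sum(q * k for q, k in zip(query, key)) / scale for key in keys]
    weights = softmax(scores)
    # Weighted average of the value vectors.
    dim = len(values[0])
    context = [sum(w * v[i] for w, v in zip(weights, values)) for i in range(dim)]
    return weights, context

# Hypothetical observation: three entities, each with a 2-d feature vector.
entities = [[1.0, 0.0], [0.0, 1.0], [0.9, 0.1]]
# Hypothetical "what matters now" vector; learned in a real agent.
query = [4.0, 0.0]

weights, context = attend(query, entities, entities)
```

Entities whose features align with the query receive the largest weights, so the `context` vector passed on to the rest of the network is dominated by them.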

Types of Attention Mechanisms in RL

- Self-Attention: Self-attention relates the different positions of a single sequence to one another in order to compute a representation of that sequence, weighting each position by its relevance to the others. It has been applied in RL tasks to improve the interpretation of sequential input data, for example in game playing and navigation.

- Multi-Agent Attention: In scenarios involving multiple agents, attention mechanisms help by focusing on the actions and states of other agents that might affect an individual agent’s decisions. This is crucial in cooperative tasks where the success of the collective depends on the synchronized actions of all agents involved.
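
Both variants can be illustrated with the same small sketch, in which every row attends over all rows. The rows can be read either as successive observations of one agent (self-attention over a sequence) or as the current states of several agents (multi-agent attention). This is a simplified illustration under stated assumptions: queries, keys, and values are the raw rows, whereas a trained model would first apply learned linear projections, and the example data is hypothetical.

```python
import math

def softmax(scores):
    """Turn raw scores into positive weights that sum to 1."""
    peak = max(scores)
    exps = [math.exp(s - peak) for s in scores]
    total = sum(exps)
    return [e / total for e in exps]

def self_attention(rows):
    """Each row attends over all rows, itself included.

    Queries, keys, and values are the rows themselves (an assumption made
    to keep the sketch short; real models use learned projections).
    """
    scale = math.sqrt(len(rows[0]))
    weight_matrix, outputs = [], []
    for query in rows:
        scores = [sum(q * k for q, k in zip(query, key)) / scale for key in rows]
        weights = softmax(scores)
        mixed = [sum(w * row[i] for w, row in zip(weights, rows))
                 for i in range(len(query))]
        weight_matrix.append(weights)
        outputs.append(mixed)
    return weight_matrix, outputs

# Hypothetical data: three time steps of one agent, or three agents' states.
rows = [[1.0, 0.0], [0.0, 1.0], [1.0, 1.0]]
weight_matrix, outputs = self_attention(rows)
```

Row `i` of `weight_matrix` shows how strongly element `i` attends to every other element, and `outputs[i]` is the resulting context-aware representation of that element.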

Applications of Attention in Reinforcement Learning

Enhanced Learning Efficiency

Attention mechanisms can improve the efficiency of learning by reducing the redundancy in the data the model has to process. By concentrating on the most relevant information, a model can learn faster and reach higher performance with fewer data samples.

Improved Policy Generalization

In complex environments with diverse and dynamic challenges, attention can help learned policies generalize across different settings. Because the agent focuses on the features that are most predictive of successful outcomes, rather than on incidental details of a particular setting, it adapts more readily to new ones.

Implementation and Performance

Implementing attention in reinforcement learning typically involves integrating it with established RL algorithms like Proximal Policy Optimization (PPO) or Deep Q-Networks (DQN). The attention layer is placed either between existing network layers (for example, between a feature encoder and the policy or value head) or within them, so that the weighting of input features is recomputed for every observation.
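
As a rough sketch of the "between layers" placement, the forward pass below pools a set of entity features with attention and feeds the result to a linear Q-value head, as a DQN-style agent might. Everything here is an assumption made for illustration: the function name `q_values`, the dimensions, and the randomly initialized `query` and `head_weights`, which a real agent would learn through the RL loss. No training step is shown.

```python
import math
import random

def softmax(scores):
    """Turn raw scores into positive weights that sum to 1."""
    peak = max(scores)
    exps = [math.exp(s - peak) for s in scores]
    total = sum(exps)
    return [e / total for e in exps]

def q_values(entities, query, head_weights):
    """Attention-pool entity features, then apply a linear Q-value head."""
    scale = math.sqrt(len(query))
    scores = [sum(q * e for q, e in zip(query, ent)) / scale for ent in entities]
    weights = softmax(scores)
    dim = len(entities[0])
    pooled = [sum(w * ent[i] for w, ent in zip(weights, entities))
              for i in range(dim)]
    # One row of head_weights per action: Q(s, a) = row . pooled
    q = [sum(h * p for h, p in zip(row, pooled)) for row in head_weights]
    return q, weights

# Hypothetical sizes and random parameters (learned in a real agent).
rng = random.Random(0)
feature_dim, n_actions = 4, 3
entities = [[rng.uniform(-1, 1) for _ in range(feature_dim)] for _ in range(5)]
query = [rng.uniform(-1, 1) for _ in range(feature_dim)]
head_weights = [[rng.uniform(-1, 1) for _ in range(feature_dim)]
                for _ in range(n_actions)]

q, weights = q_values(entities, query, head_weights)
greedy_action = max(range(n_actions), key=lambda a: q[a])
```

One consequence of this design is that the pooled vector does not depend on the order of the entities and has the same size however many entities are present, which is convenient when the number of objects in the observation changes between steps.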

Evaluations in domains such as the Arcade Learning Environment have shown that attention mechanisms can lead to better policy learning than comparable RL models without attention. Agents equipped with attention can learn faster and achieve higher scores when they focus effectively on game-critical elements, although the size of the gain depends on the game and the architecture.

Challenges and Future Directions

While promising, the integration of attention mechanisms into reinforcement learning also presents challenges. Determining the optimal architecture and tuning the attention components require significant experimentation and computational resources. Furthermore, understanding and visualizing what the model pays attention to can be complex, but it is crucial for debugging and improving model designs.
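
A simple starting point for inspecting attention is to log the weights at each step and summarize them. The sketch below is one possible approach, not an established tool: the helper names `attention_entropy` and `top_attended`, the labels, and the weight values are all hypothetical. It reports which inputs receive the most weight and how concentrated the distribution is.

```python
import math

def attention_entropy(weights):
    """Shannon entropy in nats: 0 if one input gets all the weight,
    log(n) if the weight is spread uniformly over n inputs."""
    return -sum(w * math.log(w) for w in weights if w > 0.0)

def top_attended(weights, labels, k=2):
    """Return the k (label, weight) pairs with the largest weights."""
    ranked = sorted(zip(weights, labels), reverse=True)
    return [(label, weight) for weight, label in ranked[:k]]

# Hypothetical attention weights logged from one step of an agent.
labels = ["ball", "paddle", "score display", "background"]
weights = [0.70, 0.20, 0.05, 0.05]

entropy = attention_entropy(weights)
focus = top_attended(weights, labels)
```

Entropy that stays close to `log(n)` throughout training suggests the attention layer is not discriminating between inputs, which is a useful signal when debugging.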

Going forward, we expect to see broader applications of attention in reinforcement learning across more diverse domains, including robotics, autonomous vehicles, and complex simulation environments. Research will likely continue refining the models to handle higher-dimensional data more efficiently and with greater interpretability.

Conclusion

The integration of attention mechanisms into reinforcement learning is a meaningful step towards more capable and efficient AI systems. By mimicking aspects of human attention, these systems can focus on what’s most important, learn faster, and perform better across a variety of tasks. As research in this area continues, attention is likely to play a growing role in RL and in the domains that build on it.

What is an attention mechanism in reinforcement learning?

In reinforcement learning, an attention mechanism allows an agent to dynamically prioritize parts of the input data that are more relevant for making decisions. This helps in focusing computational resources on important features, improving learning efficiency and performance.

How do attention mechanisms improve reinforcement learning models?

Attention mechanisms improve reinforcement learning models by enhancing data processing efficiency, enabling better policy generalization across different environments, and allowing agents to learn faster and perform better by focusing on crucial elements of the environment.

Can attention mechanisms be used in multi-agent systems?

Yes, attention mechanisms are particularly useful in multi-agent systems where they help each agent to selectively consider the actions and states of other agents. This is crucial for coordinating actions and achieving common goals in cooperative tasks.

What are some challenges of integrating attention mechanisms in reinforcement learning?

Challenges include determining the optimal architecture for integrating attention, tuning the system to balance attention effectively, and meeting the substantial computational cost of training and experimentation.

What future directions are expected in the development of attention mechanisms in RL?

Future directions include broader applications across diverse domains such as robotics and autonomous driving, and further refinement of the models to handle higher-dimensional data more efficiently.