Recommender systems have become an integral part of the digital landscape, guiding users through an ever-growing sea of choices in e-commerce, streaming platforms, and social media. Traditionally powered by algorithms that rely on user-item interactions, these systems are increasingly being enhanced by Deep Reinforcement Learning (DRL). This article surveys the integration of DRL into recommender systems, examining its advantages, the challenges it addresses, and the new opportunities it presents.

Why DRL for Recommender Systems?

DRL is well suited to recommender systems because it treats recommendation as a sequential decision problem: the agent learns from ongoing user interactions and optimizes cumulative, long-term reward rather than only the next click. Unlike static models that often require periodic retraining, DRL models can keep updating as they interact with users, making them more responsive to changes in user preferences and behavior. This allows for more personalized and timely recommendations, which can improve user satisfaction and engagement.

Key Components of DRL-Based Recommender Systems

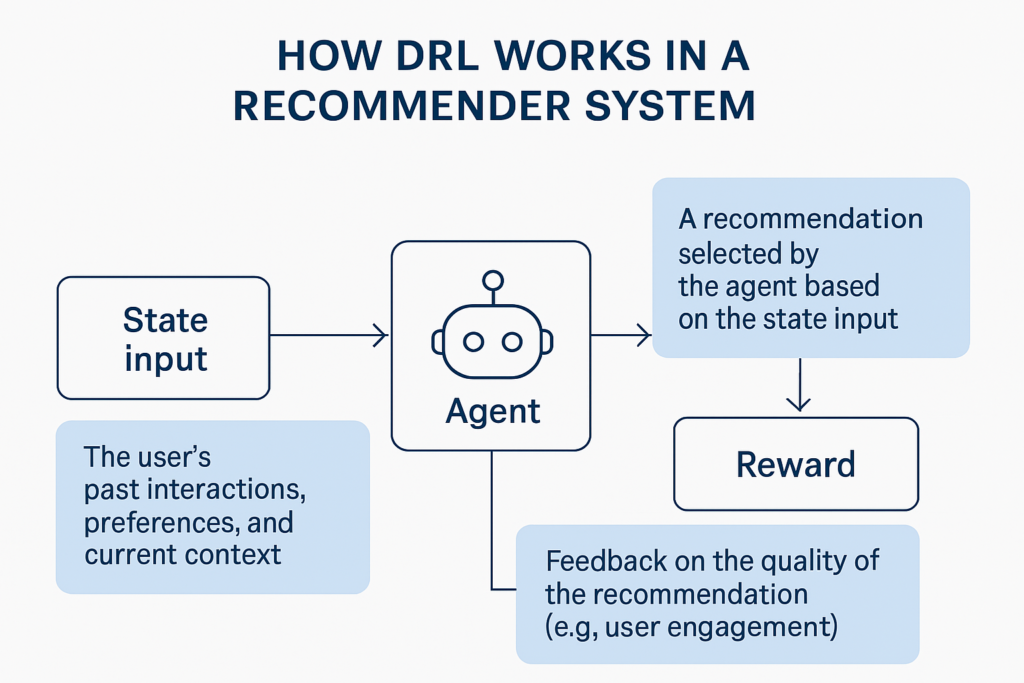

- State Representation: In the context of DRL, the ‘state’ captures the current context of a user, which may include recent interactions, demographic information, and other relevant factors. Effective state representation is crucial as it forms the basis on which the system makes recommendations.

- Action Space: The ‘action’ in DRL-based recommender systems typically involves selecting an item or a set of items to recommend to the user. The complexity of this action space varies significantly, from recommending a single item at a time to recommending a slate or ranked list of several items, where the value of each item can depend on the others shown alongside it.

- Reward Mechanism: The reward mechanism is central to the learning process in DRL, guiding the model towards better recommendations. In recommender systems, rewards are often derived from user feedback, such as clicks, purchases, or viewing time, which indicate the user’s preference or satisfaction with the recommendation.
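
The three components above can be sketched as a tiny interaction loop. This is a minimal illustration under stated assumptions, not a production design: the names `UserState`, `step`, and `REWARD_BY_FEEDBACK` are hypothetical, the state holds only recent item ids, and the reward values assigned to each feedback type are made up for the example.

```python
from dataclasses import dataclass
from typing import Tuple

# Hypothetical reward values per feedback type (assumption, not from the article).
REWARD_BY_FEEDBACK = {"ignore": 0.0, "click": 1.0, "purchase": 5.0}


@dataclass(frozen=True)
class UserState:
    """State: the user's current context, here only the most recent item ids."""
    recent_items: Tuple[int, ...] = ()
    max_history: int = 3

    def update(self, item_id: int) -> "UserState":
        # Append the new item and keep only the last `max_history` interactions.
        history = (self.recent_items + (item_id,))[-self.max_history:]
        return UserState(history, self.max_history)


def step(state: UserState, action: int, feedback: str) -> Tuple[UserState, float]:
    """One interaction: recommend item `action`, observe the user's feedback.

    Returns the next state and the reward derived from that feedback.
    """
    reward = REWARD_BY_FEEDBACK[feedback]
    # An ignored recommendation leaves the user's context unchanged.
    next_state = state if feedback == "ignore" else state.update(action)
    return next_state, reward


# Usage: recommend item 7 (clicked), then item 9 (ignored).
state = UserState()
state, reward_1 = step(state, action=7, feedback="click")
state, reward_2 = step(state, action=9, feedback="ignore")
print(state.recent_items, reward_1, reward_2)
```

In a real system the state would usually be a learned embedding of richer context, and the action could be a whole slate rather than one item id.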

Challenges in Applying DRL to Recommender Systems

Implementing DRL in recommender systems is not without challenges. One major hurdle is the definition and construction of the state and action spaces, which must accurately reflect the nuances of user behavior and preferences. Additionally, modeling the reward system in a way that truly captures the long-term value of recommendations can be complex. There is also the need for scalable solutions that can handle the enormous amount of data typically involved in modern recommender systems.
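
One common way to express the long-term value of a sequence of recommendations is the discounted return, which sums future rewards while weighting later ones less. The sketch below assumes a discount factor `gamma` of 0.9 and two made-up reward sequences; both are illustrative choices rather than values from any particular system.

```python
from typing import Sequence


def discounted_return(rewards: Sequence[float], gamma: float = 0.9) -> float:
    """Sum of rewards where the reward at step t is weighted by gamma ** t."""
    total = 0.0
    # Work backwards so each step adds its reward to the discounted future.
    for reward in reversed(rewards):
        total = reward + gamma * total
    return total


# Hypothetical sessions: an immediate click that leads nowhere,
# versus no immediate click but a later click and a purchase.
short_term = discounted_return([1.0, 0.0, 0.0])
long_term = discounted_return([0.0, 1.0, 5.0])
print(short_term, long_term)
```

Under these assumed numbers the second session scores higher even though its first recommendation earned nothing, which is the kind of trade-off a purely click-based objective would miss.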

Current Trends and Future Directions

Recent surveys categorize existing DRL-based recommender systems into model-based and model-free approaches. Model-based methods learn or assume a model of the environment, that is, how users respond to a recommendation and how their state changes afterwards, and use it to plan or to simulate interactions. Model-free methods skip the environment model and learn a value function or policy directly from interaction data through trial and error. Hybrid approaches that combine elements of both have also been developed. Emerging trends include the use of richer multimodal data and feedback signals that go beyond simple clicks to capture long-term satisfaction and engagement.
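
To make the model-free idea concrete, here is a minimal sketch of a Q-learning update applied to logged interactions. It uses a lookup table as a stand-in for the deep network a DRL system would use, and the state names, item names, rewards, and hyperparameters (`alpha`, `gamma`) are all hypothetical.

```python
import random
from collections import defaultdict


def q_learning_update(q, state, action, reward, next_state, actions,
                      alpha=0.1, gamma=0.9):
    """Move Q(state, action) toward reward + gamma * max_a Q(next_state, a)."""
    best_next = max(q[(next_state, a)] for a in actions)
    td_target = reward + gamma * best_next
    q[(state, action)] += alpha * (td_target - q[(state, action)])


def epsilon_greedy(q, state, actions, epsilon, rng):
    """Explore with probability epsilon, otherwise pick the highest-valued action."""
    if rng.random() < epsilon:
        return rng.choice(actions)
    return max(actions, key=lambda a: q[(state, a)])


# Hypothetical logged transitions: (state, recommended item, reward, next state).
ACTIONS = ["item_a", "item_b"]
LOGGED = [
    ("new_user", "item_a", 0.0, "browsing"),
    ("new_user", "item_b", 1.0, "browsing"),
    ("browsing", "item_a", 5.0, "done"),
]

q_table = defaultdict(float)  # unseen (state, action) pairs start at 0.0
for _ in range(50):  # replay the log several times
    for state, action, reward, next_state in LOGGED:
        q_learning_update(q_table, state, action, reward, next_state, ACTIONS)

best = epsilon_greedy(q_table, "new_user", ACTIONS, epsilon=0.0, rng=random.Random(0))
print(best)
```

No model of user behavior is learned here: the table is updated only from observed transitions. A model-based variant would instead fit a simulator of user responses and generate or plan over transitions with it.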

What is Deep Reinforcement Learning in recommender systems?

Deep Reinforcement Learning (DRL) combines deep neural networks with reinforcement learning. In a recommender system, a DRL agent learns from user interactions which items to recommend, adapting its recommendations as new feedback arrives.

Why is DRL suitable for recommender systems?

DRL is well suited to recommender systems because it can handle dynamic environments and continuously learn from new user interactions, which can make recommendations more accurate and personalized as user preferences evolve.

What are the main components of a DRL-based recommender system?

The key components include state representation (user context), action space (item recommendations), and the reward mechanism (feedback from user actions), which together drive the learning and adaptation process.

What challenges does DRL face in recommender systems?

Challenges include defining accurate state and action spaces, designing effective reward mechanisms, and ensuring the scalability of the system to handle vast amounts of data.

What are the future directions for DRL in recommender systems?

Future research may focus on incorporating more complex user feedback, utilizing multimodal data, and improving the models to handle larger, more dynamic datasets effectively.

Conclusion

Deep reinforcement learning opens new possibilities for recommender systems by making them more personalized and adaptive. As the technology matures, it could significantly improve how systems understand and cater to individual preferences. It remains an active area of research and application, with potential benefits for both users and businesses.

For a deeper dive into this topic, published surveys and research articles on DRL-based recommendation offer more detail and concrete examples of how DRL is being integrated into recommender systems today.